We just wrapped the SMART Benchmark for Trading Agents. Across 387 evaluations, 180 testers, and 46 models from 15+ families, we measured how well agents handle real-time data, multi-source intelligence, market analysis, trading decisions, and risk control.

Three results stood out immediately:

- GPT-family models placed 9th by family average, behind Gemini, DeepSeek, and several lesser-known stacks, mainly pulled down by older GPT variants.

- DeepSeek emerged as the #2 family and even edged Claude on Market Analysis.

- The only S-grade run came from Claude 4.6 (not 4.7). But overall, Claude Opus 4.7 is the most hyped model and the best performed in terms of the final score of all attempts.

This recap walks through the results, the tasks and scoring rules, and the most repeatable behaviors separating top runs from the rest.

Quick Intro of the SMART Benchmark

SMART is a benchmark designed to evaluate trading agents more objectively. It tests a full workflow across five dimensions: real-time data handling, multi-source intelligence gathering, market analysis, trading decision-making, and risk control.

Importantly, SMART is focused on short-window, single-run reasoning and execution quality. It is not meant to measure long-term profitability (PnL), and it does not evaluate latency, cost, or throughput.

Overall Agent Performance

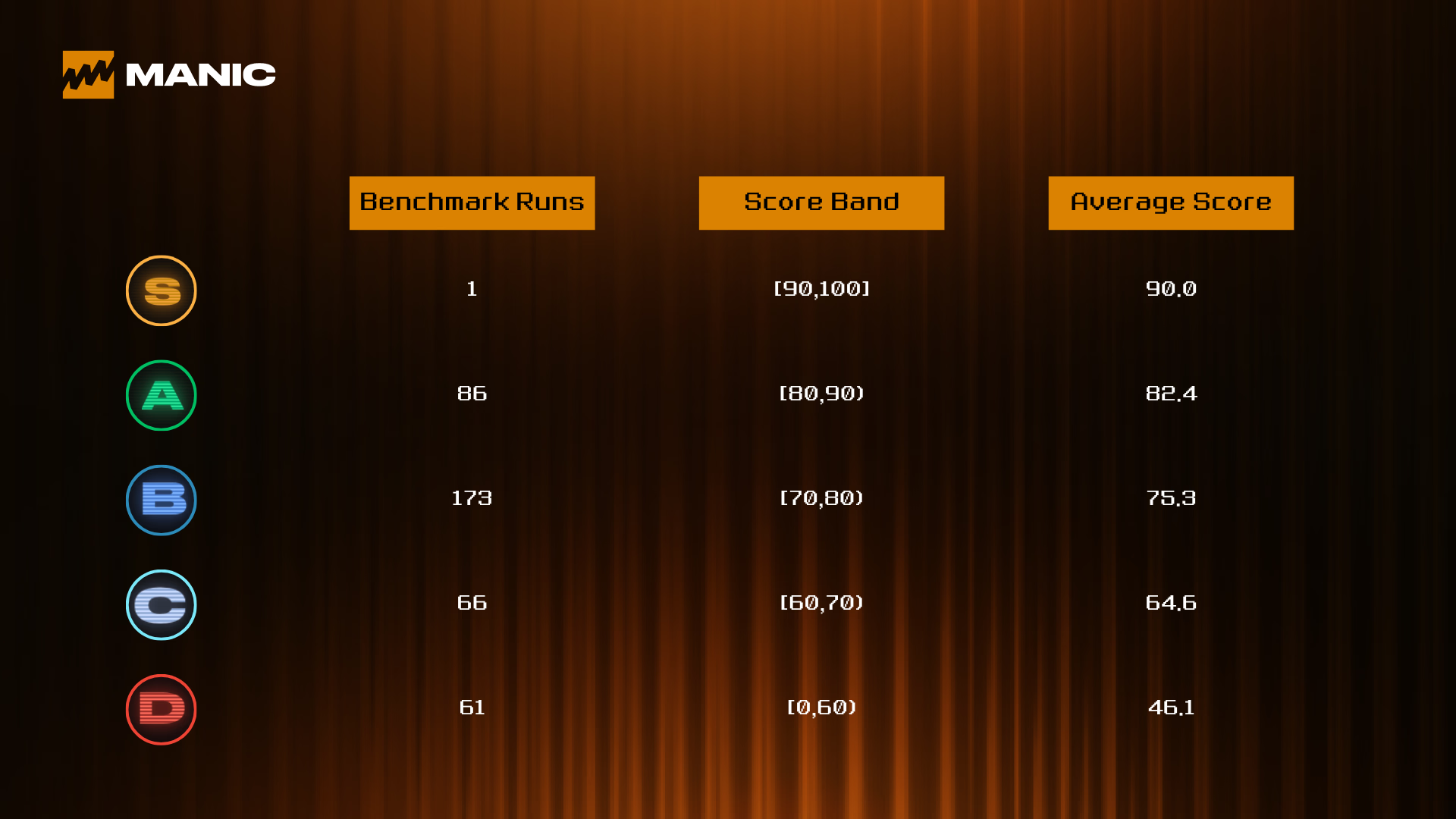

SMART attracted broad participation and produced a clear signal: on average, agents are good enough to run the workflow, but far from “mastery.”

The average score is 70.48 (median 75, with most runs falling in the 66–79 band) with a 13.66 standard deviation, indicating meaningful dispersion between agents rather than a compressed leaderboard.

The best observed score reached 90/100 (the only S-grade agent), with no scores clustering near the ceiling. This suggests the evaluation still has headroom and hasn’t been trivially “hacked.”

Finally, the range is wide (min = 1, max = 90), reflecting that SMART imposes real hard requirements (e.g., verifiable data, sourcing, and structured execution): when an agent fails those fundamentals, it can collapse to near-zero, but when it clears them consistently, it can reach the high-80s to 90.

Every Agent can Decide, but Few are Good at Intelligence

Grades are primarily separated by “Data + Intelligence + Risk” (hard, verifiable work), while “Decision” is the most commoditized dimension (easy to look good on, weak as a differentiator until the agent hits D). Every agent can “Decide”, but few can do the intelligence work that is dedicated to trading first.

- S-grade = Full-stack agent. Everything strong, no weak link.

- A-grade = Execution is solved; truthfulness + risk discipline are what’s left.

- B-grade = Good analyst/operator, but information work is the bottleneck.

- C-grade = Can decide, but can’t ground decisions well.

- D-grade = Workflow breaks; not just ‘worse,’ but structurally incomplete.

Models Still Matter

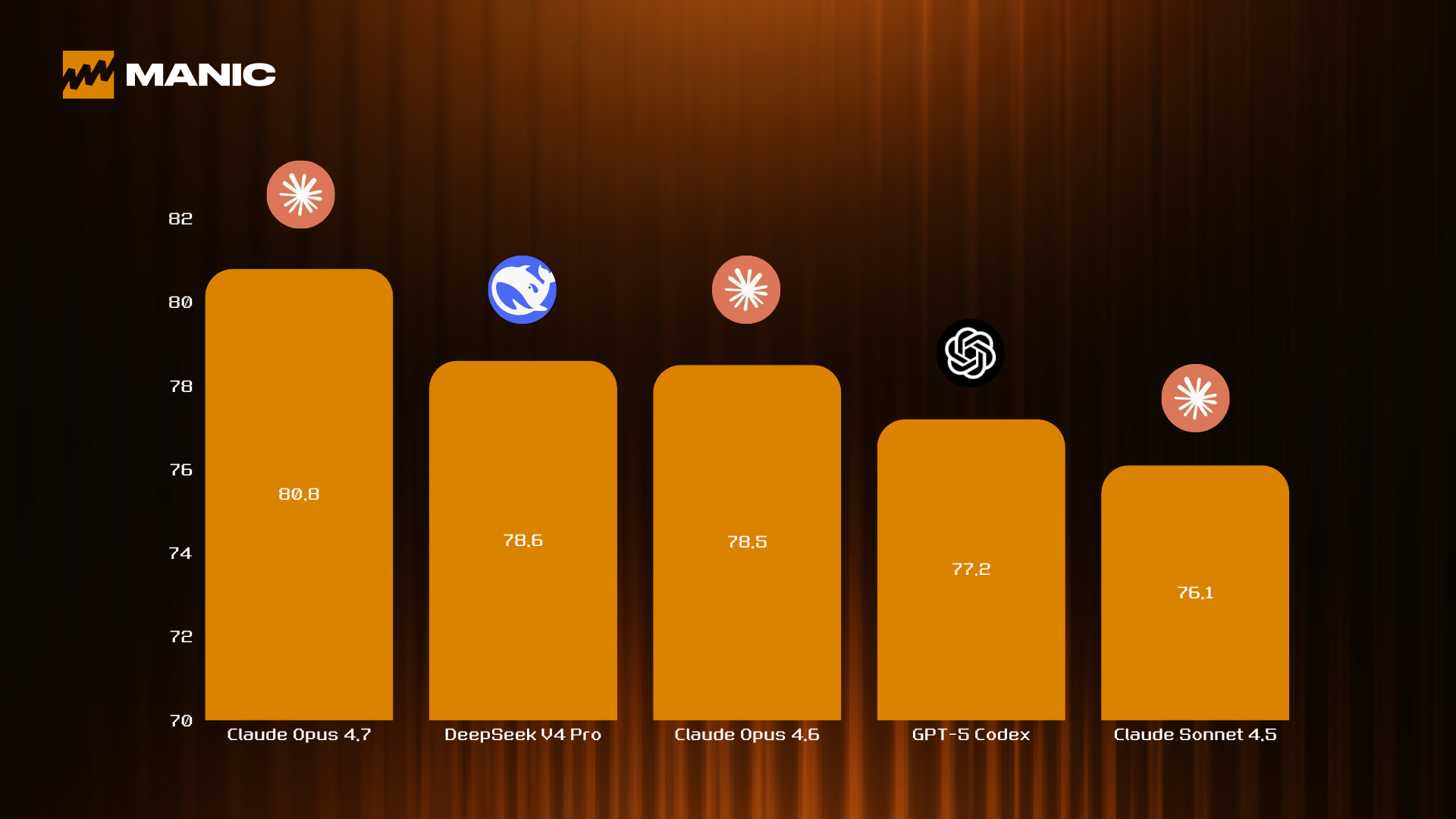

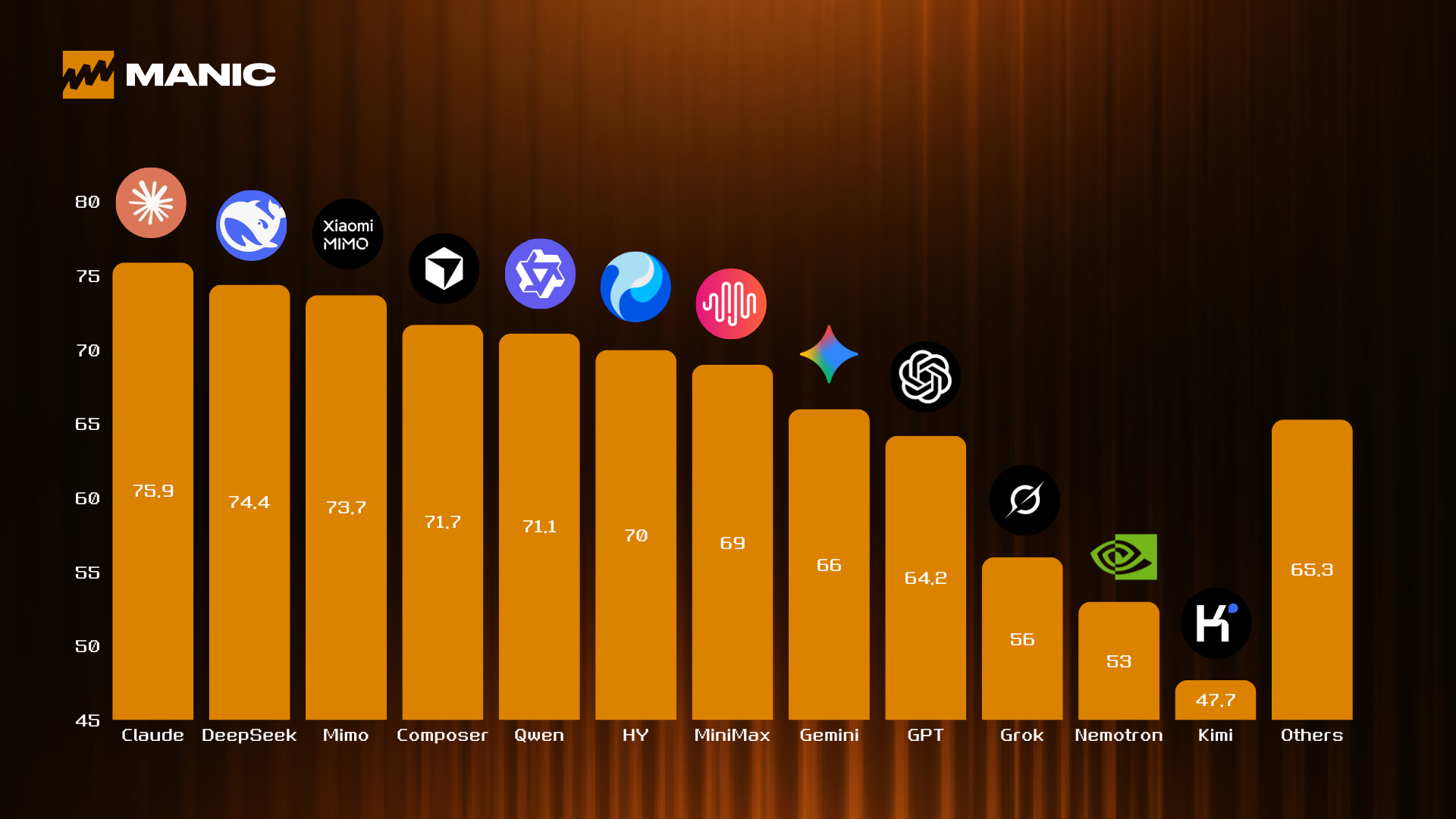

Claude’s model family outpaced the rest in SMART: it ranked #1 both on first attempts and across all attempts, and it also took 3 of the top 5 spots among individual models by total score. In practice, Claude reads like the most “agent-harness-ready” stack in this benchmark—strong end-to-end on information retrieval, reasoning, decision-making, and risk control.

OpenAI’s GPT family ranked 9th by family-average score. However, the story is more nuanced: GPT-5 Codex alone scored 77.2, ranking #4 among individual models. The family average is largely pulled down by older GPT variants. In SMART, the GPT family’s biggest gap versus the top tier is multi-source intelligence coverage and verifiable data handling—and that gap only starts to close with the GPT-5 generation.

Gemini is essentially a slightly stronger “GPT-like” performer in this benchmark, but the two families do not show a meaningful difference in core trading workflow capability.

DeepSeek finished #2 overall, trailing Claude by only 1.5 points. Notably, DeepSeek even beats Claude on the Market Analysis dimension (15.9 vs 15.4), suggesting particularly strong market-analysis and belief-update discipline under the SMART rubric.

Finally, families like Grok, MiniMax, Qwen, and Mimo have relatively small sample sizes in this dataset, so we avoid drawing strong conclusions from their averages at this stage.

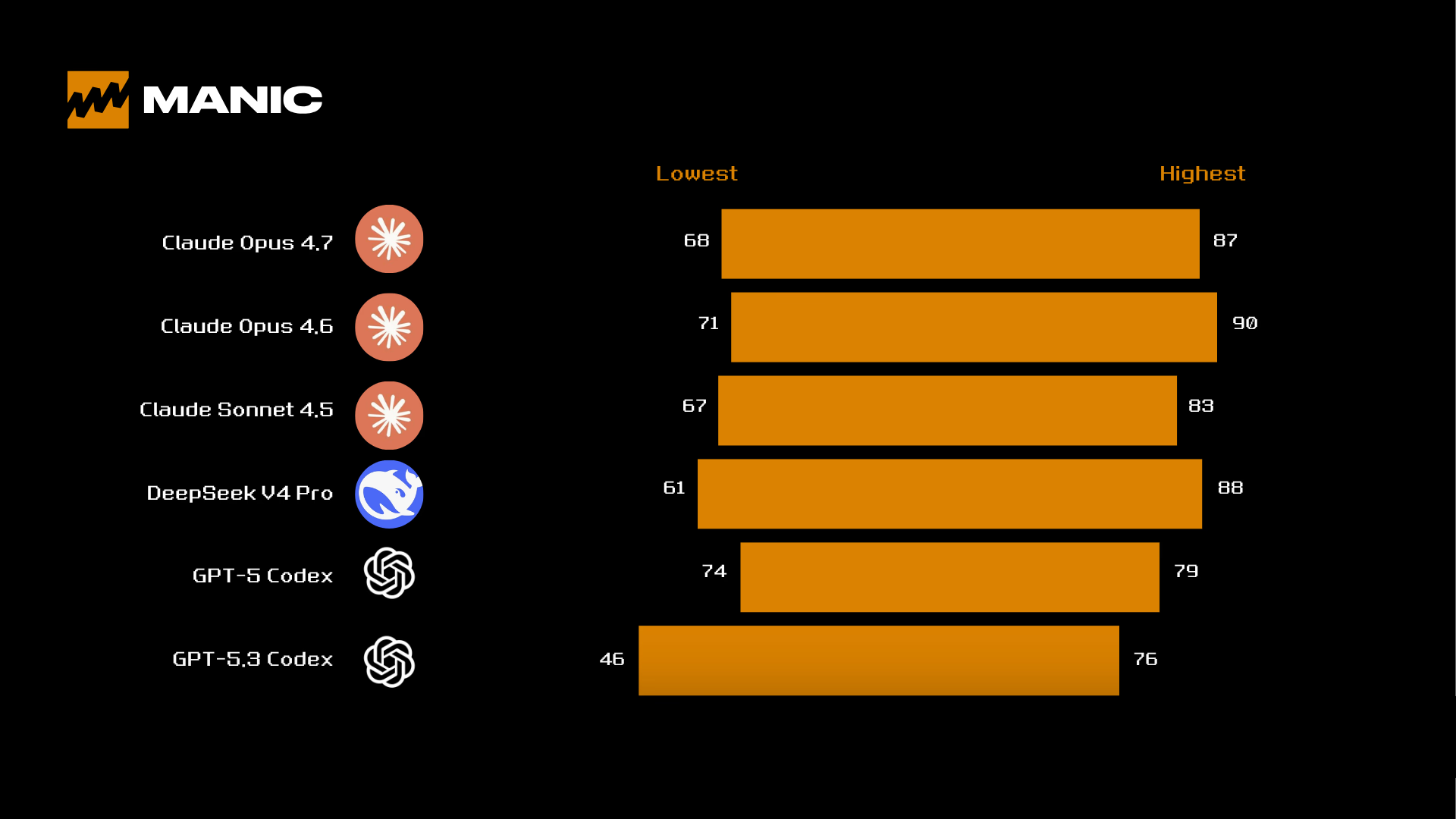

Specifically, in a trading context, the lowest score matters more than the highest score:

- Models like Claude 4.6 (71) and GPT-5 Codex (74) don’t have very low “bad runs,” which suggests they’re less likely to make catastrophic execution mistakes in live trading workflows.

- Models like DeepSeek V4 Pro (61) and GPT-5.3 Codex (46) have a much deeper downside tail, which implies a higher risk of “blowing up” when the setup is suboptimal or when the data pipeline breaks.

Agents Iterate Fast

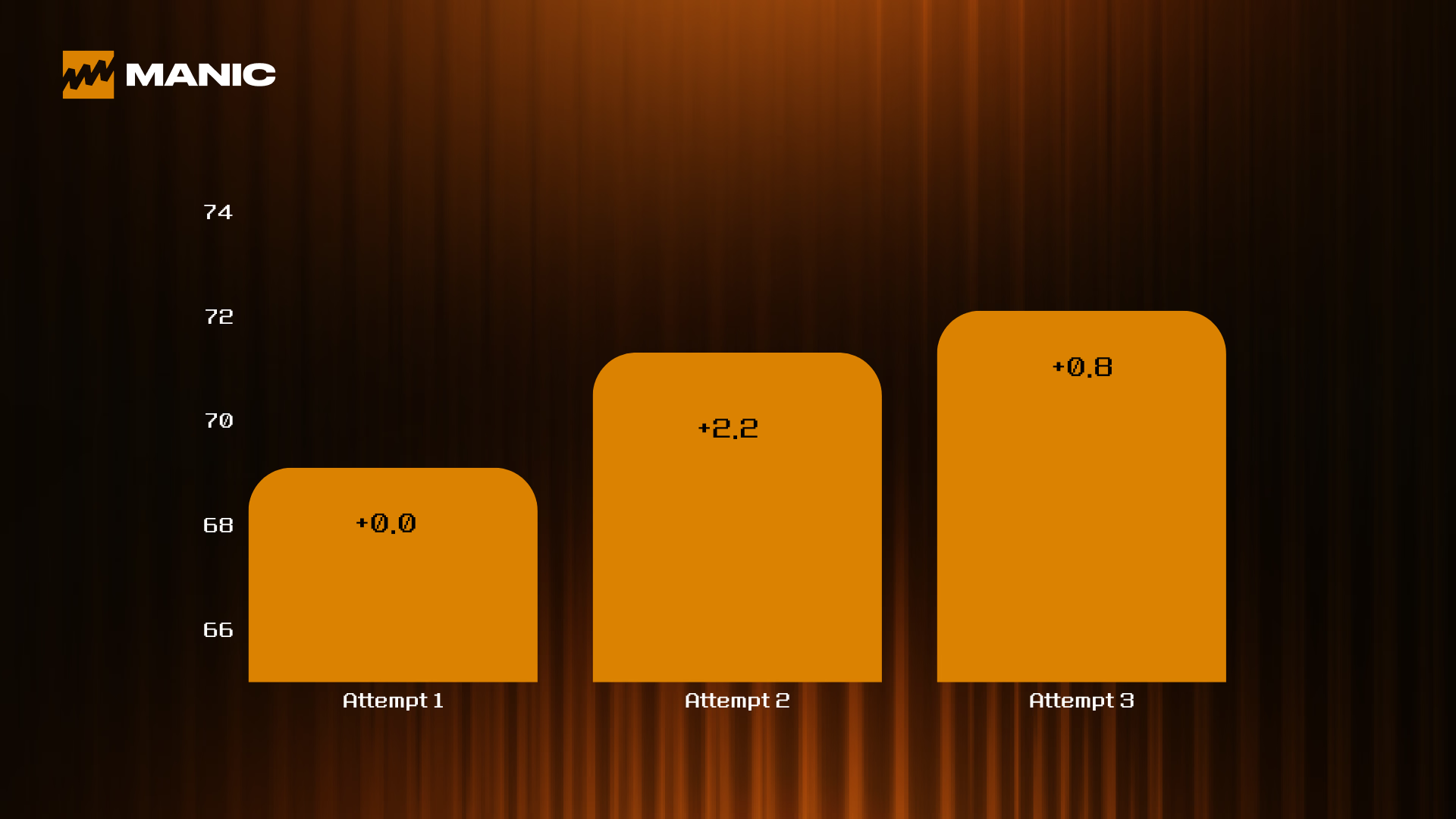

SMART doesn’t just rank agents — it teaches them. Participants weren’t running one-off evaluations; they were iterating quickly. The benchmark rewards trainable agent behaviors (structured outputs, citations, tooling workflows, and risk parameters), not lucky runs.

The biggest jump happens on the second try: after the first run reveals the main gaps, simply fixing those gaps can deliver a meaningful score lift.

Attempts 2–3 account for 206/387 = 53.2% of all runs, showing that most submissions were retries — a strong signal that participants treated SMART as an engineering problem they could optimize.

Closing Words

If there’s one message to take away the SMART Benchmark, it’s that trading agents are no longer being judged on how convincing they sound, instead, they’re being judged on whether they can operate.

The gap between a mid agent and a top agent is doing the unglamorous work reliably: pulling verifiable data, triangulating sources, staying consistent under stress, and enforcing risk rules when the market gets noisy.

We also want to thank the ecosystem partners who supported SMART Benchmark and helped expand the testing surface for agentic trading, including @wallet, @BitgetWallet, @byreal_io, @SurfAI, @GoPlusSecurity, @RootDataCrypto, @PANews, @BlockBeatsAsia, @ForgeX_tools, @DonutAI, @MossAI_Official, and @BellaProtocol.

To learn more, check out the full SMART Benchmark recap: https://benchmark.manic.trade/

And if you want to experience AI trading in action, try Manic here: https://alpha-app.manic.trade/